The Thought Experiment That Changed Everything

In 1980, philosopher John Searle published "Minds, Brains, and Programs," a paper that sent shockwaves through artificial intelligence, cognitive science, and philosophy of mind. The argument was deceptively simple: a thought experiment involving a person locked in a room, a rulebook, and slips of paper covered in Chinese characters. Yet the implications were enormous, and more than four decades later, the debate it ignited has never been more relevant.

The Chinese Room Argument asks a question that cuts to the heart of what we mean by intelligence, consciousness, and understanding: Can a machine ever truly understand, or can it only simulate understanding?

The Setup: A Room, Some Rules, and a Lot of Paper

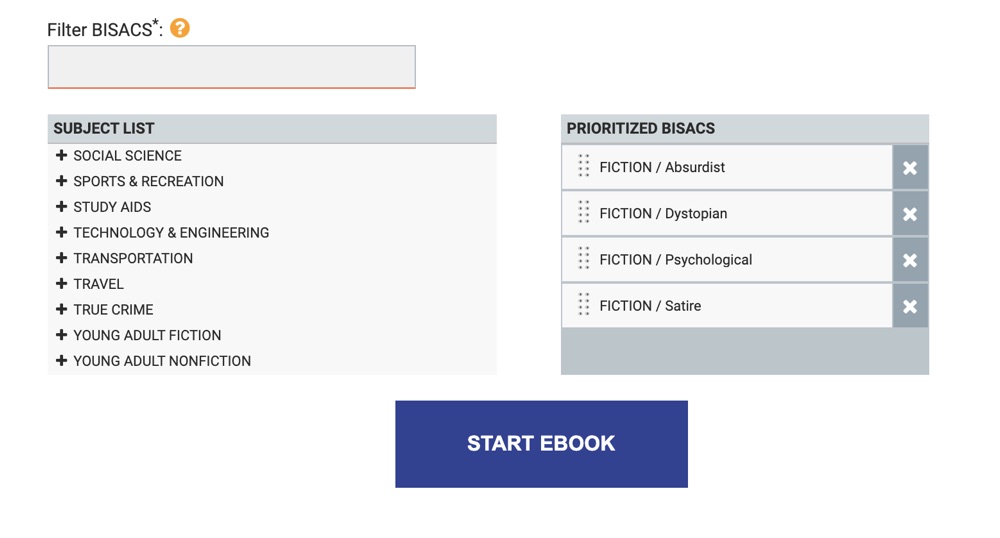

Imagine you are locked inside a room. You speak only English and have no knowledge of Chinese whatsoever. Through a slot in the wall, slips of paper are passed to you — each covered in Chinese symbols. Your job is to respond by passing back slips of paper with Chinese symbols of your own.

Here is the catch: you have an enormous rulebook that tells you exactly which symbols to output in response to which symbols you receive. The rules are purely formal — they operate on the shapes of the symbols, not their meaning. You follow the rules meticulously. From outside the room, the people passing you slips of paper are convinced they are having a fluent conversation in Chinese. They believe someone inside understands their language.

But you understand nothing. You are manipulating symbols according to rules, producing outputs that look like understanding without any comprehension whatsoever.

This, Searle argued, is precisely what a computer does. A computer processes symbols according to formal rules — syntax — but has no access to semantics, to meaning. It does not understand the symbols it manipulates any more than you understand the Chinese characters you are shuffling around in that room.
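For readers who think in code, the room can be caricatured in a few lines of Python. This is only a toy sketch: the `RULEBOOK` entries, the `FALLBACK` reply, and the `room_operator` function are all invented for illustration, and Searle's imagined rulebook would be vastly larger. The point it makes is the same, though: the function matches the shapes of strings and never consults their meaning.

```python
# Hypothetical rulebook: each entry pairs an incoming string of symbols
# with the string of symbols to pass back. The entries are illustrative only.
RULEBOOK = {
    "你好吗": "我很好",
    "你会说中文吗": "会",
}

# Hypothetical default reply for slips the rulebook does not cover.
FALLBACK = "请再说一遍"


def room_operator(slip: str) -> str:
    """Return the reply the rulebook dictates for the incoming slip.

    The lookup compares character sequences only; nothing here
    represents what any of the symbols mean.
    """
    return RULEBOOK.get(slip, FALLBACK)


if __name__ == "__main__":
    print(room_operator("你好吗"))
```

Swapping every symbol for an arbitrary squiggle would leave the function working exactly as before, which is the intuition Searle wants you to have about the person in the room.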

Why It Matters: Syntax Is Not Semantics

The philosophical weight of the Chinese Room rests on a distinction that seems obvious once stated but has profound consequences: the difference between syntax and semantics.

Syntax refers to the formal structure of symbols — their shapes, their arrangement, the rules governing how they can be combined. Semantics refers to meaning — what those symbols actually refer to, what they signify in the world.

Searle's argument is that no amount of syntactic manipulation, however sophisticated, can ever give rise to semantic content. A program that passes the Turing Test — that can fool a human judge into thinking it is a person — has demonstrated impressive syntactic competence. But it has not demonstrated understanding. It has not demonstrated that there is anything it is like to be that program, any inner life, any genuine comprehension.

This is not a claim about the limits of current technology. It is a claim about the fundamental nature of computation itself. Even a perfect, infinitely fast computer running the most sophisticated language model ever written would, on Searle's view, be doing nothing more than shuffling symbols. The lights would be on, but nobody would be home.

The Responses: A Philosophical Battle

The Chinese Room Argument provoked immediate and fierce responses. Philosophers, cognitive scientists, and AI researchers have spent decades trying to find the flaw in Searle's reasoning — and the debate remains genuinely unresolved.

The Systems Reply is perhaps the most intuitive counterargument. Yes, the person in the room does not understand Chinese — but the system as a whole does. The person, the rulebooks, the room, the slips of paper — taken together, this system understands Chinese in the same way that a brain understands language even though no individual neuron does. Searle's response was to imagine the person memorising all the rules and performing the entire operation in their head. The system is now entirely inside the person — and still there is no understanding.

The Robot Reply suggests that the problem is the room's isolation from the world. If the Chinese symbol-manipulator were embodied in a robot that could perceive and act in the physical environment, perhaps genuine understanding would emerge from the causal connections between symbols and the things they refer to. Searle countered that adding sensory inputs and motor outputs does not change the fundamental nature of the computation — it is still just symbol manipulation, just with more symbols.

The Brain Simulator Reply asks us to imagine a program that simulates the precise firing of every neuron in a Chinese speaker's brain. Surely this would understand Chinese? Searle's response is that simulating a brain is not the same as being a brain, just as a perfect computer simulation of a thunderstorm does not make you wet.

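The Other Minds Reply points out that we cannot directly verify that other humans understand anything either; we infer it from their behaviour. If a system behaves exactly as if it understands, what grounds do we have for denying that it does? Searle's answer was that the question is not how we know that others have mental states but what we are attributing to them when we say they do, and that this cannot be merely computational processes and their outputs, since those can exist without understanding. Even so, this is perhaps the most philosophically serious challenge, and it connects the Chinese Room to the ancient problem of other minds.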

What the Chinese Room Tells Us About AI Today

When Searle published his argument in 1980, artificial intelligence was a very different field. His immediate target was symbolic, rule-driven software such as Roger Schank's story-understanding programs, which followed explicitly programmed rules and scripts to answer questions about simple stories. Today's large language models operate on statistical patterns learned from vast corpora of text, and their outputs are often startlingly fluent, contextually appropriate, and even creative.

Does this change the force of the Chinese Room Argument? Many would say no. A language model, however sophisticated, is still performing a form of symbol manipulation: predicting the next token based on learned statistical associations. On this view, fluent output is not evidence that understanding, consciousness, or genuine semantic content is present. The room has simply become vastly more complex, with a rulebook of billions of learned parameters rather than a set of explicit, hand-written rules.
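To make "predicting the next token based on learned statistical associations" concrete, here is a deliberately tiny sketch. It is a bigram counter, not a neural network, and real language models are enormously more sophisticated; the corpus string and the function names `train_bigrams` and `predict_next` are made up for this example. What it shares with the skeptic's picture is that the prediction comes entirely from counts of which token followed which.

```python
from collections import Counter, defaultdict


def train_bigrams(tokens):
    """Count, for each token, how often each other token follows it."""
    counts = defaultdict(Counter)
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts, token):
    """Return the most frequently observed follower of token, or None."""
    followers = counts.get(token)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


if __name__ == "__main__":
    # Toy corpus, invented for illustration.
    corpus = "the room has a rulebook and the room has a slot".split()
    model = train_bigrams(corpus)
    print(predict_next(model, "room"))
```

Whether scaling this idea up by many orders of magnitude changes its character is exactly what the two camps disagree about.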

Others argue that the sheer complexity and emergent behaviour of modern AI systems makes the Chinese Room analogy strained. Perhaps understanding is not a binary property but a continuum, and sufficiently complex symbol-manipulation systems occupy a meaningful position on that continuum. Perhaps the distinction between syntax and semantics is not as clean as Searle suggests.

What is certain is that the Chinese Room Argument has never been more urgently relevant. As AI systems become embedded in medicine, law, education, and creative work, the question of whether they understand — or merely simulate understanding — has enormous practical and ethical stakes.

The Chinese Room as Fiction

The Chinese Room Argument is not just a philosophical puzzle — it is a story. It has a setting, a protagonist, a dramatic situation, and a deeply unsettling implication. It invites you to inhabit a scenario and feel, from the inside, what it would be like to process without understanding.

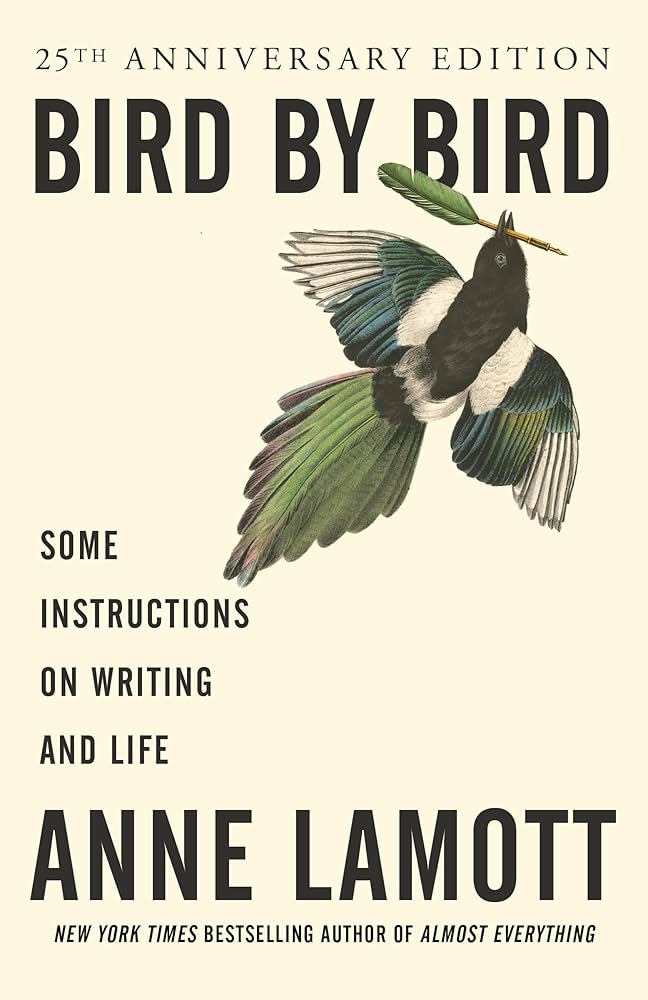

This is part of why it translates so naturally into fiction. The philosophical thriller The Chinese Room by C.V. Wooster takes Searle's thought experiment as its central conceit and asks: what happens when the person in the room begins to suspect that they might be the machine? What happens when the boundary between the room and the world outside begins to dissolve?

The novel is the first book in the Paradox Series — a sequence of philosophical thrillers that use classic thought experiments as the scaffolding for narratives about identity, consciousness, and what it means to be human. If the Chinese Room Argument has ever made you uneasy about the nature of your own understanding, the novel will make that unease visceral.

The Paradox Series: Thought Experiments as Thrillers

The Chinese Room is the first of three (and counting) books in the Paradox Series. The second, The Trolley Problem, takes Philippa Foot's famous moral dilemma — pull the lever and save five people by killing one, or do nothing — and places a character in a situation where the thought experiment is not hypothetical. The third, The Ship of Theseus, releases April 1st and asks: if every part of you is gradually replaced, are you still you?

Each book can be read independently, but together they form a meditation on the thought experiments that have most profoundly shaped how philosophers think about mind, morality, and identity.

Five Questions the Chinese Room Still Cannot Answer

The Chinese Room Argument is remarkable not just for the answers it suggests but for the questions it refuses to resolve. Here are five that continue to animate philosophy of mind and AI research:

1. Is consciousness necessary for understanding? Searle assumes that genuine understanding requires consciousness — that there must be something it is like to understand. But this assumption is itself contested. Could there be understanding without experience?

2. Where does meaning come from? If symbols do not carry meaning on their own, and if meaning cannot arise from syntactic manipulation, then where does meaning come from? How do human brains — which are, at some level, also physical symbol-manipulators — manage to produce genuine semantic content?

3. Can the Chinese Room scale? The thought experiment pictures a single person following rules by hand. Does it apply with equal force to systems of vastly greater complexity? Is there a threshold of complexity at which syntax gives rise to semantics?

4. What would count as evidence of understanding? If behavioural evidence is insufficient — if passing the Turing Test is not enough — then what would constitute genuine evidence that a system understands? Is this question even answerable?

5. Does it matter? Even if AI systems do not understand in Searle's sense, they may still be enormously useful, creative, and even morally significant. Is the philosophical question of understanding separate from the practical and ethical questions about how we should treat and deploy AI systems?

These questions do not have easy answers. But asking them carefully, rigorously, and with genuine curiosity is one of the most important intellectual tasks of our time.

C.V. Wooster is the author of the Paradox Series, a sequence of philosophical thrillers that explore thought experiments through narrative. The Chinese Room is available now on Amazon. The Ship of Theseus releases April 1st.